For years, the advance of artificial intelligence and human augmentation has been forensically debated by three broad factions.

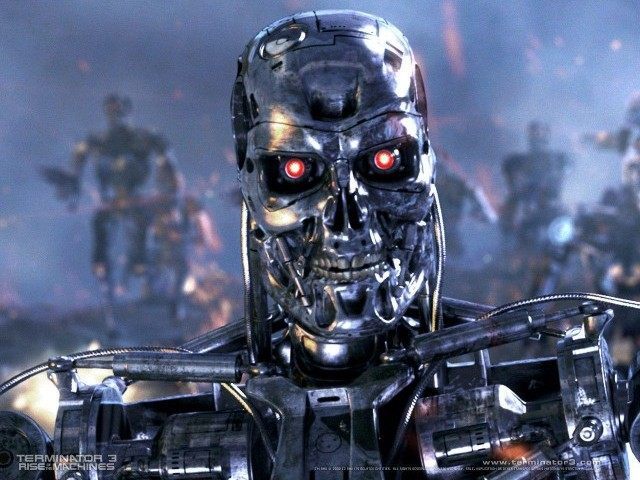

On the one side are optimists, who believe in the limitless possibilities of technology to liberate humanity. On the other side are pessimists, who believe that artificial intelligence will lead to war, political strife, and even a Terminator-style battle between machines and men. Then there are the skeptics, who believe the predictions of both sides to be hyperbolic nonsense.

It’s a debate that will grow louder as the pace of technological change increases. Recently, IBM hired 2,000 new consultants to build and sell services from its “Watson” supercomputer, arguably the closest thing humanity has to genuine A.I.

Watson is the same machine that astonished onlookers in 2011 when it beat two humans on a special episode of Jeopardy! It has encyclopedic knowledge, superhuman data analysis skills, and is capable of both understanding and talking in natural language. As its Jeopardy! win shows, it’s also capable of unravelling complex verbal puzzles, analysing words and connecting them to millions of possibilities in its vast bank of data at superhuman speeds.

IBM is a firm believer in the potential of Watson-like machines to solve everything from the problems of a local retail business to global healthcare challenges. Their new investment is the first consultancy dedicated to what they call “Cognitive Computing” – another word for artificial intelligence.

The company predicts that demand for the problem-solving abilities of supercomputers will grow rapidly in the next five years. Stephen Pratt, global leader of the new IBM consulting branch, says, “Before long, we will look back and wonder how we made important decisions or discovered new opportunities without systematically learning from all available data.”

As the age of intelligent machines dawns, it will add fuel to a previously esoteric debate between philosophers, scientists, and futurists about what the future will look like. On the one hand are optimists, who believe in the limitless capacity of smart machines to solve problems including ignorance, disease, and even death itself. They believe that artificial intelligence will one day surpass human intelligence, and in the process overcome all the challenges that humanity has yet to conquer. To them, IBM’s recent investments in cognitive computing are just further proof that utopia is on the way.

Pessimists, by contrast, do not doubt the limitless possibilities, but they foresee much grimmer implications for humanity: political strife, violence, and the possible obsolescence of humanity. Some even believe that the divide between pro-A.I. factions and anti-A.I. factions in society could lead to widespread civil wars.

Skeptics, meanwhile, believe both sides of the debate are barking mad and wildly overestimate the potential of A.I.

THE OPTIMISTS

If the optimists have a prophet, it’s Google’s commander-in-chief of A.I.: futurist and inventor Ray Kurzweil. Kurzweil worked on one of the very first computers in the 1950s and went on to pioneer advances in machine intelligence, including the first computer program capable of recognising human text in any font. He later combined this technology with a text-to-speech synthesizer to create the Kurzweil Reading Machine, the first machine allowing blind people to have written text read to them by a computer.

Beyond his impressive track record, what makes Kurzweil an icon to optimists is his range of Nostradamus-like predictions about the future of humanity. Many of these seemed bizarre and outlandish when they were originally made but were later proven correct. Kurzweil predicted that by the early 2000s, internet use would be widespread (correct), and that by the late 1990s, a computer would beat a grandmaster at chess (correct – IBM’s “Deep Blue,” a predecessor of Watson, defeated Garry Kasparov in 1997). Kurzweil’s predictions even have their own Wikipedia page.

Kurzweil’s most controversial prediction is that a “Technological Singularity” will occur within the next half-century. Kurzweil believes that exponential growth in the processing power of computers will by 2045 lead to a moment of acceleration where machine intelligence speeds past that of humanity, with unpredictable results. Kurzweil believes that once machines have moved past human intelligence in this manner, it will only be a matter of time before they tackle the problems that human minds are incapable of solving: war, disease, political conflict, and – most controversially – mortality.

Once again: Kurzweil believes that humans, with the assistance of machines, will become immortal within the next century. It’s hard to imagine a more optimistic prediction.

In other circumstances, these predictions would sound like the ramblings of a madman or a sci-fi writer having a brainstorm. But because Kurzweil has so often been correct in his predictions, and because of his ironclad credentials as one of America’s leading technological pioneers, he has not been dismissed as a madman.

Indeed, a whole network has emerged in preparation for the Singularity. “Singularity University,” set up in 2008, says its aim is to “educate, inspire and empower leaders to apply exponential technologies to address humanity’s grand challenges.” The Machine Intelligence Research Institute, while not mentioning the Singularity directly, acknowledges that “researchers largely agree that A.I. is likely to begin outperforming humans on most cognitive tasks in this century.”

There are even signs of an ideology forming around this optimistic vision of the future. Zoltan Istvan, a futurist, philosopher, and sci-fi author, is currently running for President of the United States on the first “Transhumanist Party” ticket. Istvan has stated that if elected, his primary goal would be to help scientists and technologists “overcome death and aging within 15-20 years, a goal an increasing number of leading scientists think is reachable.” CNET calls Istvan “The only Presidential candidate promising immortality.”

THE SKEPTICS

Needless to say, not everyone believes that superintelligent robots will make us immortal within the next 20 years.

Biologists and evolutionary psychologists, who study the evolved instincts of human beings, are especially skeptical of the idea that human-like machine intelligence can be achieved soon, if ever. “I think were [Kurzweil] a biologist, he would be more moderate in his extrapolations of the uses of our technology,” says neurobiology professor William B. Hurlbut. “Engineering a better human being is going to be a daunting task. We’ve had five million years of field testing, and that has filtered down to an organism that is very attuned to a range of environments and a range of talents and a range of possibilities.”

Steven Pinker, the world-leading Harvard psychologist, goes even further. “There is not the slightest reason to believe in a coming singularity,” Pinker told IEEE Spectrum in 2008. “The fact that you can visualize a future in your imagination is not evidence that it is likely or even possible. Look at domed cities, jet-pack commuting, underwater cities, mile-high buildings, and nuclear-powered automobiles–all staples of futuristic fantasies when I was a child that have never arrived. Sheer processing power is not a pixie dust that magically solves all your problems.”

It’s easy to see where they’re coming from. Human intelligence is a biological process that evolved over millions of years. The idea that it could be surpassed in just over a century (the time between the emergence of the first computers and Kurzweil’s predicted Singularity) by a machine with no biology to speak of sounds far-fetched.

Proponents of the singularity contend that technological progress is an extension of evolution, not an attempt to rebuild imitation humans from scratch. Joan Slonczewski suggests that technology is a process of outsourcing. “We invented writing, printing and computers to store our memories,” writes Slonczewski. “Most of us can no longer recall a seven-digit number long enough to punch it into a phone. Now we invent computers to beat us at chess and Jeopardy, and baby-seal robots to treat hospital patients.” Taken this way, it’s easier to imagine humans gradually passing their knowledge, skills, and abilities onto machines, until the machines become indistinguishable from us or, as in the case of Deep Blue and Watson, even surpass us.

THE PESSIMISTS

The third major faction in the A.I. debate is the pessimists. They are often cut from a similar cloth to the optimists: they’re futurists, computer scientists, and academics. They accept the optimists’ predictions about the trajectory of artificial intelligence, but they have a much darker vision of the consequences.

The most famous of these, of course, is the so-called Terminator scenario, in which clever machines decide that humans are too dangerous (either to themselves or to the robots) to be left to their own devices, and stage a global takeover. Elon Musk, CEO of SpaceX and an investor in A.I., has openly worried about such a scenario.

The source of the fear is twofold. On the one hand, artificial intelligence will be designed to solve problems. On the other hand, once machines reach a superintelligent, post-singularity state, it’s difficult to precisely predict how they will use their intelligence, even if humans remain in command of them.

“When we create a superintelligent entity,” warns futurist philosopher Nick Bostrom, “we might make a mistake and give it goals that annihilate mankind… We tell it to solve a mathematical problem, and it responds by turning all matter in the solar system into a giant calculating device.” As A.I. researcher Eliezer Yudkowsky puts it, “The A.I. does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.”

It’s the other side of the coin to Kurzweil’s singularity: if machines become smart enough to solve the existential problems of humanity, they’ll also be smart enough to create new ones.

Another pessimistic prediction is that humanity will tear itself apart over the mere idea of superintelligent A.I. before it even arrives. Hugo de Garis, a former A.I. researcher, has predicted a global conflict between the supporters of A.I. and their opponents. According to de Garis, the question of whether or not to create artificial beings with superior intellects to humans will lead to a zero-sum ideological split across humanity – worse even than the divide between communist and capitalist countries in the 20th century.

Writing in Forbes, de Garis explains the questions that he believes will divide humanity in the future: “Millions of people will be asking such questions as: Can the machines become smarter than humans? Is that a good thing? Should there be a legislated upper limit to machine intelligence? Can the rise of machine intelligence be stopped? What if China’s soldier robots are smarter than America’s soldier robots? And so on and so forth.”

De Garis thinks that the divides caused by these questions will ultimately lead to a global conflict, which he calls the Artilect War: “This will be the worst, most passionate war that humanity has ever known, because the stakes – the survival of our species – have never been so high. The scale of killing will not be in the millions… but in the billions.”

ACCELERATING FUTURE

The people who think and write about the future of artificial intelligence certainly can’t be accused of lacking imagination. Indeed, many of the future scenarios envisaged by A.I. researchers end up as sci-fi books or movies. Films like Ex Machina continue to appeal to society’s growing unease regarding the rise of machines that may one day be smarter than us. But A.I. researchers aren’t writing sci-fi – they genuinely believe that the dramatic scenarios they describe will come about.

Follow Allum Bokhari @LibertarianBlue on Twitter.

Breitbart Tech is a new vertical from Breitbart News covering tech, gaming and internet culture. Bookmark breitbart.com/tech and follow @BreitbartTech on Twitter and Facebook.
