TEL AVIV – An Israeli start-up claims to have developed a program that can identify terrorists, pedophiles, and even expert poker players in just a fraction of a second using facial analysis tools.

Faception has already signed a contract with a security agency to help identify terrorists, the Washington Post reported Tuesday.

“We understand the human much better than other humans understand each other,” said Faception chief executive Shai Gilboa. “Our personality is determined by our DNA and reflected in our face. It’s a kind of signal.”

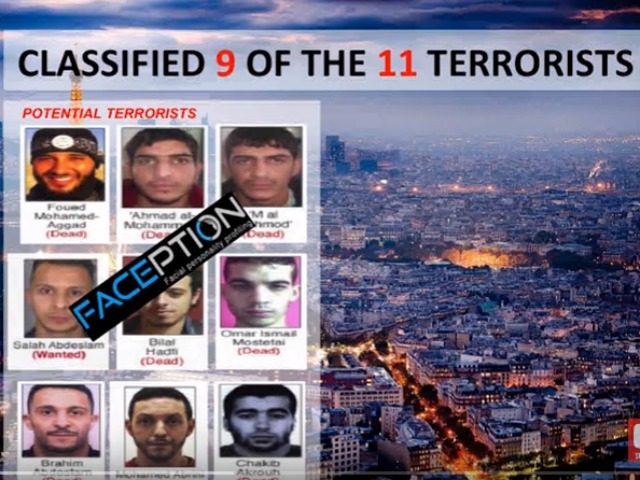

The inventors say the app has already successfully identified nine of the terrorists involved in November’s terror attacks in Paris.

In a blind test, the technology correctly classified 25 of 27 facial images as belonging to either poker players or non-poker players.

Faception has developed 15 “classifiers,” each of which describes a certain personality type or trait with what Gilboa says is 80% accuracy.

The classifiers, which analyze faces taken from videos, cameras and databases, “match an individual with various personality traits and types with a high level of accuracy,” according to the company’s website.

But experts warn of the technology's limits and the ethical issues it raises.

“Can I predict that you’re an ax murderer by looking at your face and therefore should I arrest you?” Pedro Domingos, a professor of computer science at the University of Washington and author of “The Master Algorithm,” told the Washington Post. “You can see how this would be controversial.”

Domingos described a colleague who built a seemingly successful system for telling dogs from wolves, only to discover that its success came from the computer having learned to look for snow in the background of the wolf pictures.

“If somebody came to me and said ‘I have a company that’s going to try to do this,’ my answer to them would be ‘nah, go do something more promising,’” he said.

Meanwhile, Princeton psychology professor Alexander Todorov, an expert in facial perception, said: “The evidence that there is accuracy in these judgments is extremely weak.”

But Gilboa, who says he also serves as the company’s chief ethics officer, insists that the classifier will never be made available to the general public. He adds that governments should treat Faception’s findings on terrorists only as a supplement to other investigations.