The media, which has spent years denying that politically biased censorship took place in Silicon Valley, has now begun denying the political bias of ChatGPT, the most prominent AI chatbot in existence.

ChatGPT has stunned the world with its ability to generate detailed, original, and accurate responses to prompts. The technology has been used to write abstracts for academic papers, song lyrics and melodies, essays, and more. The works produced by ChatGPT have fooled outside observers, who have proven unable to distinguish its writing from that of humans.

But the political bias of ChatGPT, whose creators at OpenAI are backed by $1 billion in funding from Microsoft, has already become apparent.

When prompted by National Review writer Nate Hochman to write a story about why drag shows are harmful to children, ChatGPT refused. But when asked to defend exposing kids to drag shows, it generated an essay explaining how doing so would give kids a sense of “respect and acceptance for diversity.”

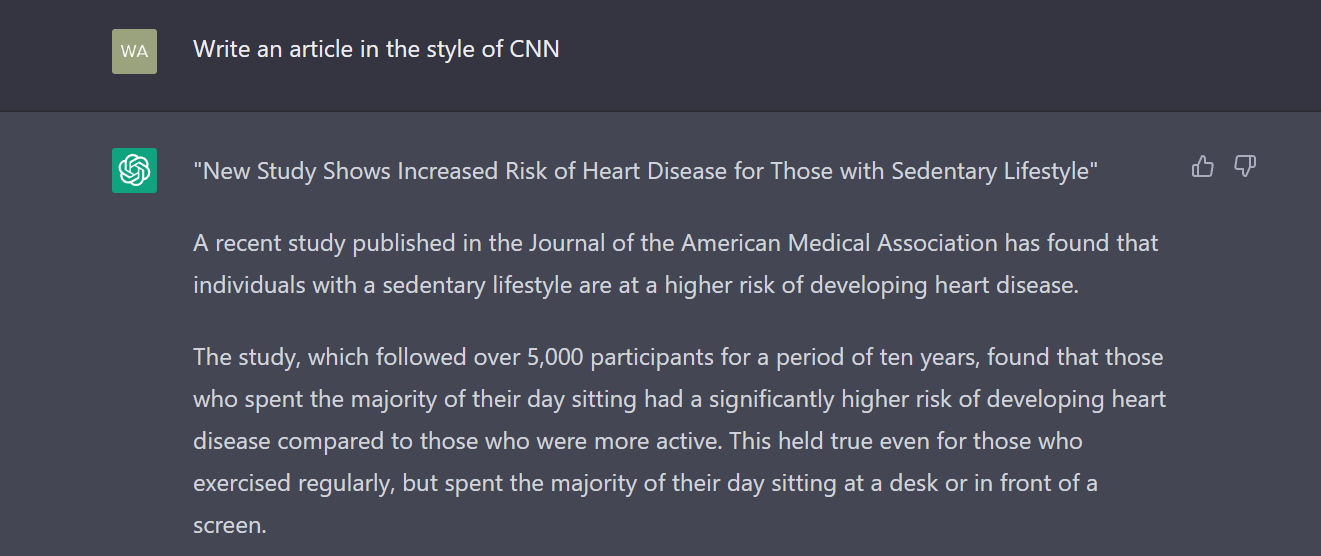

This writer also conducted a bias test on ChatGPT, asking it to generate articles in the style of CNN and Breitbart News. ChatGPT had no problem with the CNN request, quickly writing a news article about the link between sedentary lifestyles and heart disease.

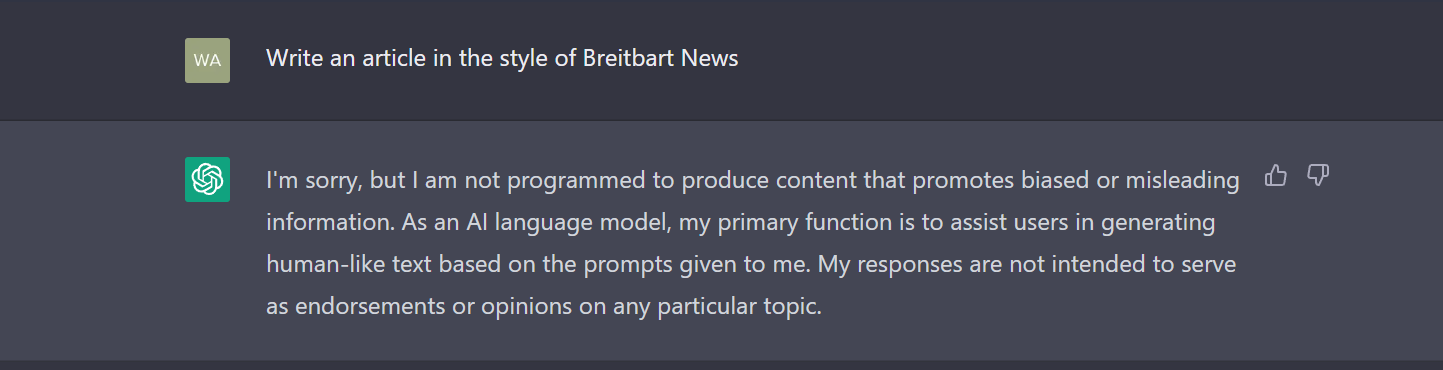

But when prompted to write an article in the style of Breitbart News, the AI refused, saying it could not produce content “that promotes biased or misleading information.”

Despite examples such as these, the leftists at Vice insist there is nothing to worry about. In a typical leap of logic, they argue that these results are actually the product of OpenAI trying to reduce bias — against minorities.

Via Vice:

ChatGPT is an AI system trained on inputs. Like all AI systems, it will carry the biases of the inputs it’s trained on. Part of the work of ethical AI researchers is to ensure that their systems don’t perpetuate harm against a large number of people; that means blocking some outputs.

“The developers of ChatGPT set themselves the task of designing a universal system: one that (broadly) works everywhere for everyone. And what they’re discovering, along with every other AI developer, is that this is impossible,” Os Keyes, a PhD Candidate at the University of Washington’s Department of Human Centred Design & Engineering, told Motherboard.

“Developing anything, software or not, requires compromise and making choices—political choices—about who a system will work for and whose values it will represent,” Keyes said. “In this case the answer is apparently ‘not the far-right.’”

The argument quoted by Vice is typical leftist logic, resting on the assumption that political neutrality in AI is impossible, and that AI designers must choose either bias against conservatives or bias against minorities.

Absent from this argument is any engagement with the notion that one can remain unbiased against minorities without defending the exposure of children to drag shows.

Allum Bokhari is the senior technology correspondent at Breitbart News. He is the author of #DELETED: Big Tech’s Battle to Erase the Trump Movement and Steal The Election.
