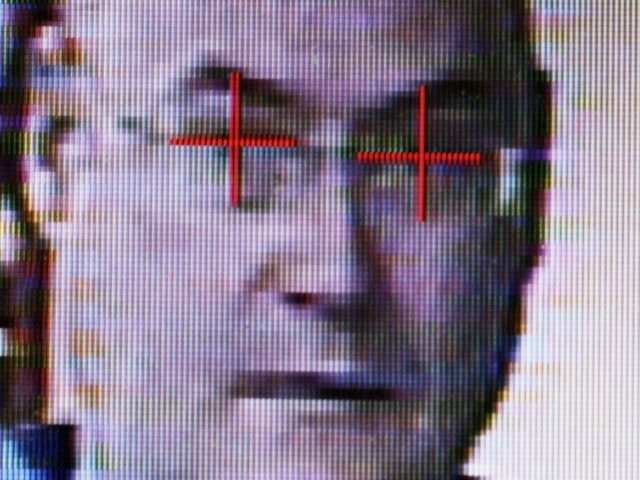

British police should drop facial recognition software, increasingly used by authorities to monitor the public, as evidence shows it is “almost entirely inaccurate”, campaigners have said.

The Home Office has spent £2.6m funding the technology in South Wales alone, according to a report by the group Big Brother Watch (BBW).

Figures revealed in response to Freedom of Information requests by the group show that for London’s Metropolitan Police, 98 per cent of “matches” identified by the technology were wrong. For South Wales Police, the figure stood at 91 per cent.

Police have been rolling out the software to be used at major events such as sporting fixtures and music concerts, including a Liam Gallagher concert and international rugby games, aiming to identify wanted criminals and people on watch lists.

It was even used by the Metropolitan Police to scan for people on a mental health watch list at the 2017 Remembrance Sunday event in London.

Silkie Carlo, Director of Big Brother Watch, blasted: “Real-time facial recognition is a dangerously authoritarian surveillance tool that could fundamentally change policing in the UK. Members of the public could be tracked, located and identified – or misidentified – everywhere they go.”

We’ve discovered Met plans to target 7 more events this year with authoritarian and dangerously inaccurate facial recognition – after using it at Notting Hill Carnival 2 years in a row with disastrous effect.

This must stop. Join our #FaceOff campaign! https://t.co/rPpduRpaGV pic.twitter.com/xEb5O2iNeR

— Big Brother Watch (@bbw1984) May 15, 2018

She continued: “We’re seeing ordinary people being asked to produce ID to prove their innocence as police are wrongly identifying thousands of innocent citizens as criminals.

“It is deeply disturbing and undemocratic that police are using a technology that is almost entirely inaccurate, that they have no legal power for, and that poses a major risk to our freedoms.

“This has wasted millions in public money and the cost to our civil liberties is too high. It must be dropped.”

During the technology’s use in South Wales, 2,451 out of 2,685 matches were found to be incorrect. Of the remaining 234, there were 110 interventions and 15 arrests.

In London, the Metropolitan Police said there had been 102 false positives, where someone was incorrectly matched to a photo, and only two that were correct.
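The percentages quoted earlier can be checked against these raw counts. The short sketch below is illustrative only: it assumes each percentage is simply the number of wrong matches divided by the total number of matches flagged, and the function name `wrong_match_share` is made up for this example. It measures the share of flagged matches that were wrong, not the share of all faces scanned.

```python
def wrong_match_share(wrong: int, total: int) -> float:
    """Return the percentage of flagged matches that were wrong."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * wrong / total


# South Wales Police: 2,451 wrong out of 2,685 matches (figures from the article).
south_wales = wrong_match_share(2451, 2685)

# Metropolitan Police: 102 false positives and two correct matches.
met = wrong_match_share(102, 102 + 2)

print(f"South Wales Police: {south_wales:.0f}%")  # 91%
print(f"Metropolitan Police: {met:.0f}%")         # 98%
```

Under that assumption, the counts reproduce the 91 per cent and 98 per cent figures reported for the two forces.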
