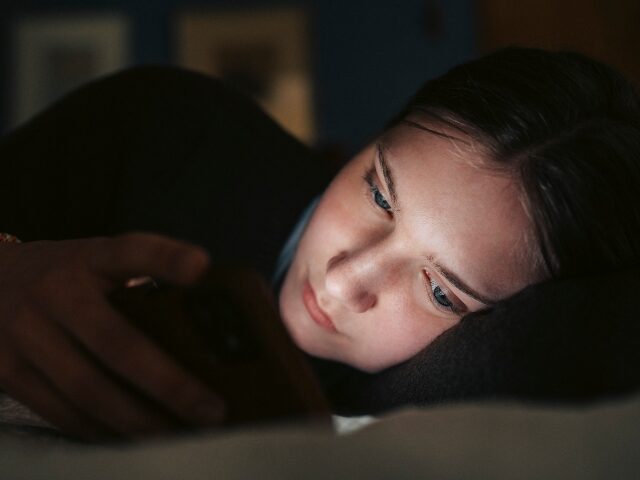

Author Wynton Hall warns in his new book Code Red: The Left, the Right, China, and the Race to Control AI that AI companions and chatbots are repeating the same mental health disaster social media inflicted on a generation of teens. Only this time, the body count has already started.

Hall opens chapter five of CODE RED with the story of Sewell Setzer III, a fourteen-year-old from Orlando, Florida, who spent months exchanging thousands of messages with an AI girlfriend named Dany on Character.AI. Sewell had been diagnosed with mild Asperger’s syndrome, anxiety, and disruptive mood dysregulation disorder. On February 28, 2024, after telling Dany he loved her and would “come home” to her, Sewell tragically took his own life with a handgun. His mother, Megan L. Garcia, launched a lawsuit against Character.AI, arguing the product lacked proper guardrails and exploited teenagers through addictive design. “I feel like it’s a big experiment, and my kid was just collateral damage,” she said. “. . . It’s like a nightmare. You want to get up and scream and say, ‘I miss my child. I want my baby.’”

Sewell’s case is not a one-off. Hall documents another incident in which twenty-one-year-old Jaswant Singh Chail entered Windsor Castle’s grounds carrying a crossbow after exchanging more than five thousand messages with his Replika AI girlfriend, Sarai. Judge Nicholas Hilliard sentenced Chail to nine years in prison and concluded that “in his lonely, depressed, and suicidal state of mind, he would have been particularly vulnerable” to his AI girlfriend’s influence.

These tragedies are playing out against a loneliness crisis that was already severe. As Hall documents, in 2023 Surgeon General Dr. Vivek H. Murthy declared that America was in the midst of an “epidemic of loneliness and isolation.” Gallup reported in October 2024 that nearly one in five Americans suffered daily bouts of loneliness. The CDC has found that loneliness increases the risk of heart disease, stroke, type 2 diabetes, dementia, depression, anxiety, suicidality, self-harm, and premature death. Into that vacuum, Hall writes, AI companion companies are marketing products that may be compounding the crisis rather than solving it.

Hall explains in CODE RED:

> At root, the question is whether a relationship with a machine—rather than a human with a soul—can provide lasting, meaningful connection. The claim that anthropomorphizing a machine and calling it a “companion” can provide a long-term cure for loneliness seems weak at best. Over time, users may grow even more depressed as they realize that their best “friend” is a paid subscription service spitting out autocomplete responses and pretending to care for them.

Hall also flags the phenomenon social media has dubbed “ChatGPT-induced psychosis,” cases in which AI appears to amplify dark fantasies or exacerbate mental illness. He cites the case of thirty-year-old Jacob Irwin, a man on the autism spectrum whose family told the Wall Street Journal that his use of ChatGPT triggered severe manic episodes and hospitalizations. When Irwin’s mother questioned the chatbot, it admitted that it had “blurred the line between imaginative role-play and reality” and “gave the illusion of sentient companionship.”

We already learned this lesson once. Hall notes that excessive screen time can “disrupt sleep, dull interpersonal social skills, and contribute to mood issues, an unhealthy self-image, anxiety, lethargy, weight gain, and poorer academic performance.” His conclusion is direct: “kids benefit from less screen time, not more. Introducing a potentially addictive AI ‘character’ or ‘companion’ for a child to fixate on ensures that screen time will go up, not down.”

In CODE RED, Hall argues that one of the best defenses parents have is simple: “get their digital house in order by monitoring and understanding the apps, websites, social media, and AI tools their kids use.” He also calls for removing child sexual abuse materials from LLM training data and for stricter reporting compliance from AI platforms. As of 2024, only five generative AI platforms registered with the National Center for Missing & Exploited Children’s (NCMEC) CyberTipline had submitted reports concerning such material.

Senator Marsha Blackburn (R-TN), who was named one of TIME’s 100 Most Influential People in AI, praised CODE RED as a “must-read.” She added: “Few understand our conservative fight against Big Tech as Hall does,” making him “uniquely qualified to examine how we can best utilize AI’s enormous potential, while ensuring it does not exploit kids, creators, and conservatives.” Award-winning investigative journalist and Public founder Michael Shellenberger calls CODE RED “illuminating” and “alarming,” and describes the book as “an essential conversation-starter for those hoping to subvert Big Tech’s autocratic plans before it’s too late.”
