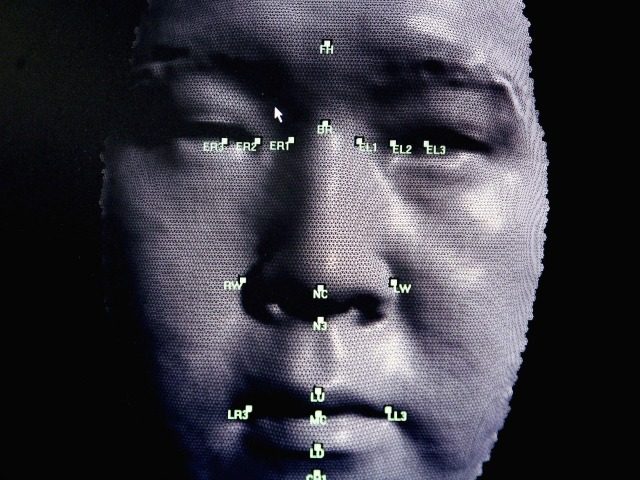

E-commerce giant Amazon has defended its facial recognition software from claims of racial and gender bias following a study by MIT.

BBC News reports that Amazon has called a study by the Massachusetts Institute of Technology (MIT) “misleading” after it claimed that the company’s facial recognition software, known as Rekognition, is both racially and gender biased. The study found that while no facial recognition tool was 100 percent accurate, Amazon’s performed the worst at recognizing women with darker skin compared to software from companies such as Microsoft and IBM.

The MIT study found that Amazon’s software had an error rate of approximately 31 percent when identifying the gender of women with dark skin in images, while rival software developed by Kairos had an error rate of 22.5 percent and IBM’s software had a rate of just 17 percent. However, software from Amazon, Microsoft, and Kairos correctly identified images of light-skinned men 100 percent of the time.

Facial recognition tools are trained on large datasets of hundreds of thousands of images, and some are now worried that these datasets are not diverse enough. Dr. Matt Wood, the general manager of artificial intelligence at Amazon Web Services, highlighted many of the issues he had with the study in a blog post, noting that, among a number of other errors, the study did not even use the latest version of Rekognition.

Dr. Wood stated that the study did not align with many of Amazon’s findings and noted that Rekognition used a data library consisting of 12,000 images of men and women across six different ethnicities. Wood wrote: “Across all ethnicities, we found no significant difference in accuracy with respect to gender classification.” Wood also noted that law enforcement officials were told to use machine-learning facial recognition results only when the results had a certainty of 99 percent or higher.

MIT researcher Joy Buolamwini responded to Wood’s post in a blog post of her own, stating: “Keep in mind that our benchmark is not very challenging. We have profile images of people looking straight into a camera. Real-world conditions are much harder.” Buolamwini continued: “The main message is to check all systems that analyze human faces for any kind of bias. If you sell one system that has been shown to have bias on human faces, it is doubtful your other face-based products are also completely bias free.”
