Instagram is rolling out a new AI tool that will supposedly detect whether someone has captioned their Instagram post with “offensive” content. The social media platform says that the tool, which is meant to tackle online bullying, will suggest that users “reconsider” their choice of words if its AI detects offensive language.

“Starting today, we are rolling out a new feature that notifies people when their captions on a photo or video may be considered offensive,” announced Instagram on Monday, adding that the tool will then give the offending user “a chance to pause and reconsider their words before posting.”

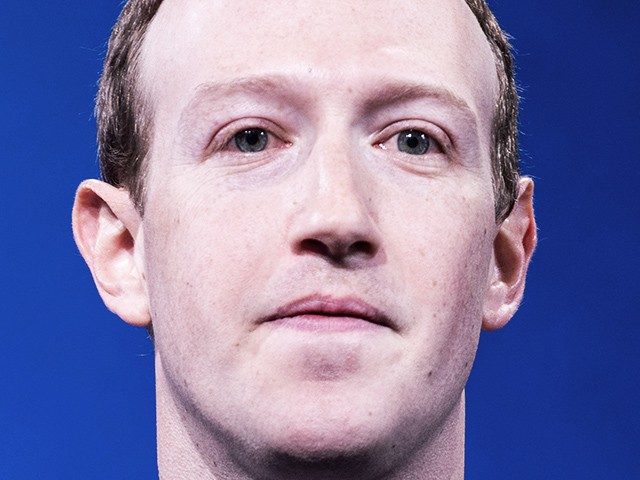

The Facebook-owned social media platform says that its new tool is designed to prevent bullying on the platform and has already been rolled out in “select countries,” but it did not specify which ones.

Regardless, Instagram says that the new tool will be implemented globally within a few months.

“As part of our long-term commitment to lead the fight against online bullying, we’ve developed and tested AI that can recognize different forms of bullying on Instagram,” elaborated the social media site in its announcement.

“Earlier this year, we launched a feature that notifies people when their comments may be considered offensive before they’re posted,” added Instagram.

That earlier feature, which Instagram implemented in July, works the same way but applies to comments left on other users’ posts rather than to captions on a user’s own Instagram post.

“Results have been promising, and we’ve found that these types of nudges can encourage people to reconsider their words when given a chance,” said the social media platform.

“Today, when someone writes a caption for a feed post and our AI detects the caption as potentially offensive, they will receive a prompt informing them that their caption is similar to those reported for bullying,” added Instagram. “They will have the opportunity to edit their caption before it’s posted.”

Instagram also suggested that, “in addition to limiting” bullying, the tool helps to “educate” users on what type of language is considered offensive.

“In addition to limiting the reach of bullying, this warning helps educate people on what we don’t allow on Instagram, and when an account may be at risk of breaking our rules,” said Instagram.

You can follow Alana Mastrangelo on Twitter at @ARmastrangelo, and on Instagram.
