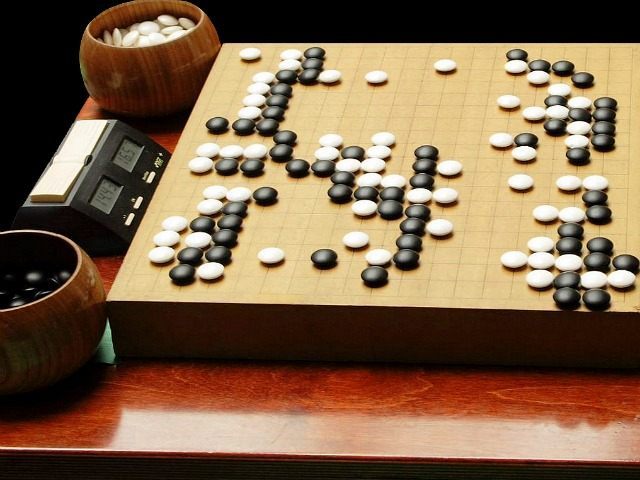

Google’s London-based DeepMind artificial intelligence has beaten Fan Hui, the European Go champion, at his own game. It’s also won more than 99% of its games against competing AIs.

“This is a really big result, it’s huge,” according to French programmer Rémi Coulom. He tried to accomplish what DeepMind’s AlphaGo program has with his own project, Crazy Stone, but previously believed AI mastery of the game was still years away.

The AI hasn’t been programmed explicitly to win at Go, however. It’s managed to learn the game itself, using a general-purpose algorithm. It’s already done the same thing with nearly 50 arcade games, but Go is a different beast. The game’s intricacies have eluded even the most sophisticated AI until now.

Before AlphaGo took on Fan Hui, it studied 30 million advanced piece positions, applying “deep learning” in its neural networks. Then it played itself fifty times, just to be sure. Previously, Go AIs just scanned samples of games that resembled the one in which they were currently embroiled. AlphaGo went several steps further, picking its moves and interpreting the board itself rather than simply referencing a library of previous plays.

It might seem that learning to play Go is a trivial advancement, but the victory is a strong indicator of DeepMind’s forward progress. In fact, it’s a big step forward for artificial intelligence in general. It represents an advance in tasks that require long-term decisions on complex, multifaceted subjects. Consider that Go has more than a googol (that’s 10^100) potential moves in any given game, and you can see why this is a step up from IBM’s mastery of chess.
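For a rough sense of that scale, here is a back-of-the-envelope sketch in Python. It assumes a standard 19x19 board, which the article does not specify, and it counts board configurations rather than moves. Each of the 361 points can be empty, black, or white, so 3^361 is only a loose upper bound that includes illegal positions.

```python
# Rough scale check, assuming a standard 19x19 Go board
# (the board size is an assumption, not stated in the article).
BOARD_POINTS = 19 * 19  # 361 intersections
GOOGOL = 10 ** 100

# Each point is empty, black, or white, so 3**361 is an upper bound on
# board configurations. It over-counts, since it includes illegal positions.
upper_bound = 3 ** BOARD_POINTS


def order_of_magnitude(n: int) -> int:
    """Return the power of ten of n's leading digit (digit count minus one)."""
    return len(str(n)) - 1


print(order_of_magnitude(GOOGOL))       # 100
print(order_of_magnitude(upper_bound))  # 172
print(upper_bound > GOOGOL)             # True
```

Even this crude bound sits dozens of orders of magnitude above a googol, which is consistent with the article’s “more than a googol” figure.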

Fan Hui claims that if he hadn’t known, he would have believed that he was playing against another person. Perhaps “a little strange, but a very strong player, a real person,” he said. Toby Manning, the match’s referee, observed that the computer seemed to have taken up a very conservative, calculating style of play.

And the game of Go itself is emblematic of the future uses of artificial intelligence. The game dates back to at least 500 BC and is commonly believed to be related to the art and tactics of war. An AI that can master Go is one step closer to mastering the battlefield.

And while it’s impossible to know how far we are from having literal robotic warlords sending our troops into battle, it’s important to remember that very recently, a computer that could win at this millennia-spanning game was considered a comfortable decade away.

Follow Nate Church @Get2Church on Twitter for the latest news in gaming and technology, and snarky opinions on both.
